Regularisation Can Mitigate Poisoning Attacks: A Novel Analysis Based on Multiobjective Bilevel Optimisation

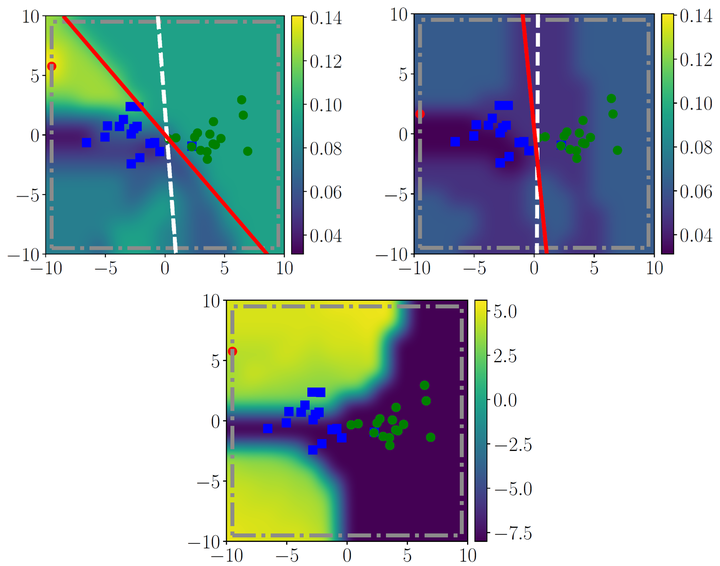

Effect of regularisation on a synthetic binary classification problem.

Abstract

Machine Learning (ML) algorithms are vulnerable to poisoning attacks, where a fraction of the training data is manipulated to deliberately degrade the algorithms’ performance. Optimal poisoning attacks, which can be formulated as bilevel optimisation problems, help to assess the robustness of learning algorithms in worst-case scenarios. However, current attacks against algorithms with hyperparameters typically assume that these hyperparameters remain constant, ignoring the effect the attack has on them. We show that this approach leads to an overly pessimistic view of the robustness of the algorithms. We propose a novel optimal attack formulation that considers the effect of the attack on the hyperparameters by modelling the attack as a multiobjective bilevel optimisation problem. We apply this novel attack formulation to ML classifiers using L2 regularisation and show that, in contrast to previously reported results, L2 regularisation enhances the stability of the learning algorithms and helps to mitigate the attacks. Our empirical evaluation on different datasets confirms the limitations of previous strategies, demonstrates the benefits of using L2 regularisation to dampen the effect of poisoning attacks, and shows how the regularisation hyperparameter increases with the fraction of poisoning points.
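
As a rough sketch of the structure described above, the attack can be pictured as two outer objectives that share one inner training problem. All symbols here are illustrative assumptions rather than notation taken from the paper: $\mathcal{D}_p$ is the set of poisoning points, $\Phi$ the set of admissible poisoning sets, $\mathcal{D}_{\text{tr}}$ and $\mathcal{D}_{\text{val}}$ the training and validation sets, $\mathcal{D}_{\text{target}}$ the data on which the attacker measures damage, $\mathcal{A}$ the attacker's objective, $\mathcal{L}$ the learner's loss, $\mathbf{w}$ the model parameters and $\lambda$ the L2 regularisation hyperparameter.

$$
\begin{aligned}
\text{attacker:}\quad & \max_{\mathcal{D}_p \in \Phi} \ \mathcal{A}\left(\mathcal{D}_{\text{target}}, \mathbf{w}^\star\right) \\
\text{learner:}\quad & \min_{\lambda} \ \mathcal{L}\left(\mathcal{D}_{\text{val}}, \mathbf{w}^\star\right) \\
\text{subject to}\quad & \mathbf{w}^\star \in \arg\min_{\mathbf{w}} \ \mathcal{L}\left(\mathcal{D}_{\text{tr}} \cup \mathcal{D}_p, \mathbf{w}\right) + \frac{\lambda}{2} \lVert \mathbf{w} \rVert_2^2
\end{aligned}
$$

Under this reading, attacks that hold the hyperparameters constant correspond to dropping the learner's outer problem and fixing $\lambda$ to a value chosen before the attack, so the learner cannot respond to the poisoning by increasing the regularisation strength.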